The Advantages Of Uninterruptible Power Supply

We depend greatly on our computers. Whether for business or personal use, our computers hold a lot of valuable information which enables us to fulfill tasks of all sorts. We

When constructing a building as important as a data center, you want to be able to rely on a company that can offer you the best quality. Data centers are

If you are in need of a quality uninterruptible power supply (UPS) unit, then you need to find a company that is experienced in providing exceptional equipment for large-scale business

Infographic (click image to enlarge): http://www.titanpower.com/public/images/data-center-outages-reasons.png. Source: http://www.titanpower.com/blog/understanding-costs-reasons-for-data-center-outages/ (Understanding Costs & Reasons For Data

If you are planning to design and construct a new data center or just to renovate your old one, there are some things that you need to keep in mind

It isn’t hard to imagine what the outcome would be if a mission critical facility lost power for even a minute. With so much depending on those significant businesses, it

Life is unpredictable and can produce unexpected circumstances. Hurricanes, lightning and wind storms, and tornadoes can result in damage to your computer systems and destroy important data. Although you can’t

There will be times when the normal supply of power for your electrical equipment will no longer be sufficient. It could be that too many items are plugged in, that

Stuff, stuff, stuff. It is one common factor that all businesses deal with. And it is all useful stuff, too, because if it were not, it could be thrown away.

Just like disposal engineers actually turn out to be what used to be called garbage men, the name power distribution strips is sort of a fancy way of saying plug

Does your business run into power failure problems? Have power outages become a big irritant and complaint in your business? It is vital that your business finds a mission critical

Power protection is extremely important in keeping IT assets protected from power loss issues. Even small outages are a problem to most companies and businesses. Losing power, even only for

When designing a data center there are more things to think about than just simply putting servers and racks in a room and naming it the data center. There are

In a world that is run heavily by technology, a data center outage can bring disastrous results. In recent years, internet users have suffered through many significant data center outages

If you are a person or a company starting up a data center, whether it is starting from scratch or whether it has just grown in bits and pieces and

If you are interested in having a data center for your company or a group of companies, designing such a thing may be well beyond your technological skill level. After

Since the invention of the computer and the dawn of the age of technology, computers have become an absolute necessity for nearly every business and company to function and compete

Proper Computer Room Air Conditioning Can Keep Data Center Equipment Running At Optimum Performance. Any time that computer system equipment is contained in a typical sized server room, the environment

When Power Outages Happen, It Pays To Have A 24/7 Data Center Maintenance Plan. It would be a big help for businesses of all types if the power company could

How Proper Computer Room Air Conditioning Can Keep Data Center Equipment Running At Optimum Performance Any time that computer system equipment is contained in a typical sized server room, the

Whether you have a fairly standard data center, or one that is designed uniquely for your business, you still need to have a plan in place for regular maintenance of

Your uninterruptible power supply (UPS) is your emergency plan to keep your business running in the event of a power failure, and one of the most critical components of that

With the world relying on electricity you may have found yourself suffering from the loss of power. With an uninterruptible power supply you will never have to suffer again. The

If you’ve ever lost important information or even potential business because of a power outage or utility failure, you might consider purchasing an uninterruptible power supply. Almost every business or

One of the most important planning considerations for your data center is getting the right uninterruptible power supply (UPS)—after all, without power, your data center is useless. The common industry

Large and small data centers have or are connected to server systems, UPS, computer rooms, networked computers, and many other electronics and equipment. All of those large and small businesses

Even when you have put every preventative measure in place, your data center could become the site of fires, flooding, or other unpredictable emergencies. If this happens, then you want

These are pictures of Valve Regulated Lead Acid (VRLA) batteries that were damaged by excessive heat buildup in the battery causing “Thermal Runaway.” The most typical application for VRLA batteries

Modern companies all over the world that rely on large scale technology and complex support systems to maintain the operation of critical loads must have a way to prevent anything

Today’s data centers are vastly different from ten, five, and even one year ago, and the proper installation of power distribution units (PDUs) is an exercise in both addressing your

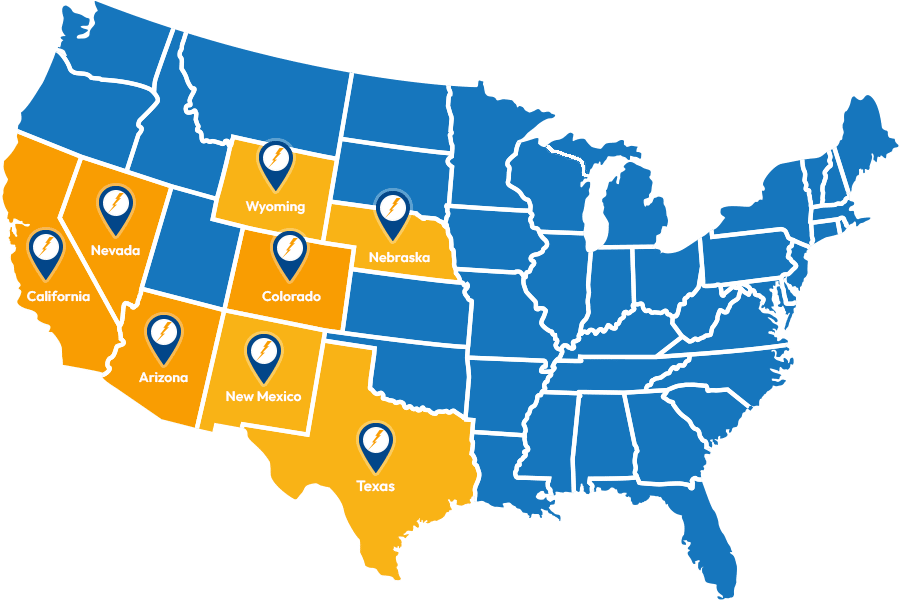
