Server Room Fire Suppression Best Practices

Data centers must delicately balance the need for infrastructure and equipment that runs all day and maximizes uptime with the need to manage heat and fire risk associated with electronic

Every data center utilizes a UPS – Uninterruptible Power Supply – to ensure that power is always available, even if there is a power interruption. Minimizing downtime while maximizing energy

There are few things more important to a data center than continuous power. Without it, a data center will experience prolonged downtime, significant financial loss, a damaged reputation and other

IT and OT – though they are two different things, the previous tendency to “divide and conquer” when it came to strategy, management and solutions is going away. When it

Technology is evolving minute by minute and data centers must work to keep up with the lightning-paced evolution. We have discussed the Internet of Things (IoT) before – the world

Whether you operate a data center or any other business, business continuity is incredibly important. We all think we are immune to disaster but the reality is, if you have

Stringent security protocols are one of the most important aspects of properly running any data center. With constant, round-the-clock advancements in technology, the focus of security protocols is often on

Aside from security, maximizing uptime is likely the top priority of just about any data center, regardless of size, industry or any other factors. Most businesses today run on data

Cloud computing, in one form or another, is here and it is not going anywhere. It is for a good many reasons – it provides easy scalability, is less expensive

The recently established Data Center Optimization Initiative (DCOI) is an important mandate for federal data centers that encourages the sharing of information to encourage optimization of infrastructure and reduce inefficiency

Every year we see certain trends arise in data center design and construction and, as 2016 winds down, we are able to take a look back at the year and

Ask just about any client what one of the most important things they are looking for in a data center is and you will likely hear, “security” over and over

Data center power consumption is evolving all the time, becoming more efficient but, generally, growing. While many data centers are making green initiatives and finding ways to make their energy

“Renewable energy.” “Clean energy.” These may sound like buzzwords – trendy little catchphrases meant to grab your attention and sound good but they are far more than buzzwords, they are

Every business can experience information and data silos on some level, particularly when various applications and systems must communicate with each other. But, these silos are particularly evident, costly, and

Enterprise data centers may be a dying breed. Today we are seeing more and more data centers opt for colocation over enterprise data centers because of the high cost and

The tech industry loves to use catchy phrases to describe various processes, innovations and aspects in data centers. Every now and then, we think it is important to narrow in

From time to time we see new “catchphrases” or terminology pop up in the tech world and suddenly they are being used everywhere. One of these phrases is “the internet

If there is one thing that a data center is concerned with, aside from maximizing uptime, it is security. In today’s world we constantly hear news stories about security breaches

In today’s data center world, there is a lot of discussion over increasing rack density, utilizing the space you have without having to relocate, and more. Working with the space

WANs, or wide-area networks, may not have been prioritized in the past, but more and more data center managers are looking closely at WANs as the future of data centers. WAN

When you think about “protection” in a data center, you probably think about protecting critical data, protecting infrastructure, protecting uptime, etc. But, it is also important to think about protecting

Data centers function with a continuous goal of maximizing uptime. It is important to avoid outages at all costs while constantly trying to improve energy efficiency and maximize data storage

What will the data center look like in 5 years or even 10 years? It may sound impossible to predict but experts are weighing in and providing their predictions for

Data center cooling is a topic that could be discussed endlessly. What works best for one data center may not work well for another depending on a variety of factors

Security Risks: In the wake of many high-profile data breaches, from government institutions to retailers, there is an evolving environment in the data management world. An environment that requires

Winter is soon approaching and with it comes the concern of not just managing the drop in temperature, but also managing the low humidity that comes with it. Ensuring temperature

The most underrated force that leads to downtime or inefficiency in the data center is personnel. Even when systems are functioning optimally, human error can lead to unexpected consequences due

Providing energy to servers is a substantial part of a data center’s costs. In many cases this is due to servers running consistently at peak performance in preparation for peak

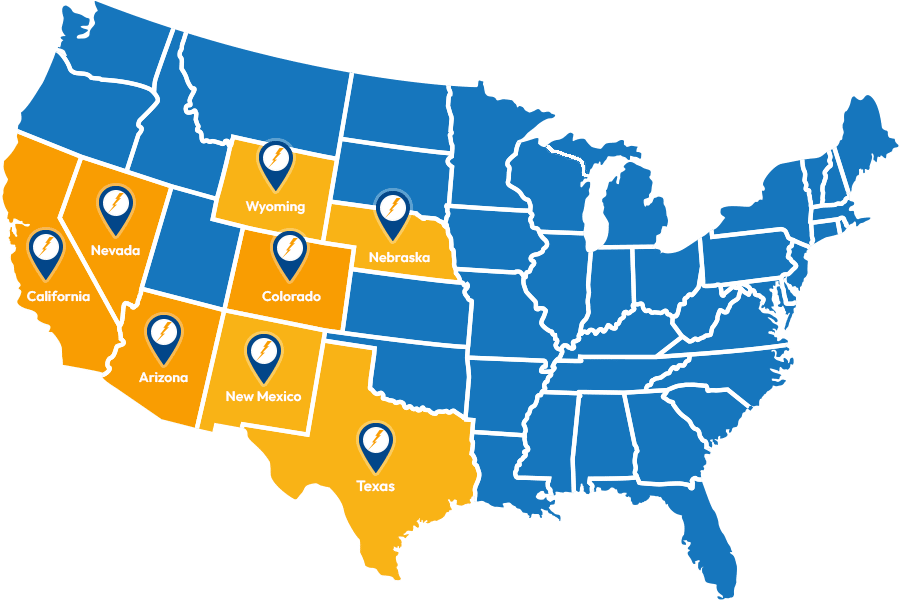

With the increase in remotely performed operations, and the need for fewer crew members on site due to automated procedures, there is a wide range of locations that can be
