Industrial Machines Are Coming To The Cloud

According to Gartner, Inc., 20.8 billion objects will have connectivity by 2020. The Internet of Things has been widely cited as the force behind this predicted growth in devices

Ensuring hardware is protected from unexpected physical consequences is an essential and often overlooked part of maintaining a data center. The strategies below will reduce the risk of downtime and

As the Internet of Things (IoT) continues to develop, the wisdom of converged infrastructure is making more and more sense. The basic idea behind it, developed during the early days

According to the Gartner Group, a provider of technology-related information and statistics, $5,600 a minute is the average cost of data center downtime, which amounts to a whopping $336,000 per

Liquid cooling CPUs to improve performance by removing waste heat more effectively is not a novel concept. In one form or another, it has been growing in popularity for

For a number of years now, the move among IT departments at most major corporations has been toward consolidation, and much of the development of cloud infrastructure has revolved around

It is clear at this point that cloud computing is here to stay, with most industries not only moving toward the enthusiastic adoption of cloud applications for day-to-day operations, outsourced

Many people who work with computers on a daily basis have developed at least a passing acquaintance with the cloud. Most IT professionals are usually more knowledgeable than the average

Steps to Optimize the Flash Storage in Your Data Center

As flash-based storage becomes a larger and larger part of the marketplace for data storage solutions, new processes that take

The first and probably broadest answer to this conundrum is actually fairly simple: since biometric processes are inherently hardware-focused, they don't scale effectively, because large-scale networks wind

One of the fastest growing new technologies is network function virtualization, also known as NFV. First introduced in 2012, this modern approach to network services has the potential to

With the modern day shift towards digitization of data and processes, IT departments worldwide are being called upon to rapidly grow their service catalog to include all the new technologies

Cloud computing is the process of using an off-site network of computers or servers to process and store data. Cloud computing is often seen as the solution to all local

One of the most important aspects of any data center is its reliability. In order to keep everything running smoothly, it’s imperative that maintenance teams and operations managers prepare for

The rate of change for data storage needs in the cloud is huge. To take one example from a Google research paper: YouTube users upload 400 hours of video

The internet has connected us all. It has made it possible for people and computers anywhere in the world to communicate. We want to be able to work from

How (And Why) IT Facilities Should Reduce Energy Consumption

One of the single biggest users of electricity in a given organization is usually the IT department. Cutting back on this

Computer room air conditioning (CRAC) systems are critical to maintaining computing equipment efficiency and longevity, and therefore maintaining your data center’s CRAC system is critical as well. A recent

When it comes to addressing customer demands, many technological companies are turning to Hyperconverged Infrastructure (HCI). Somewhat primitive at first, these technologies have evolved over the past few years, allowing

The trend in technology these days seems to be to migrate all related applications to a single source. This seems intuitive given that everyone would love to be able to

As more and more businesses depend on their data centers for continued operation, outages can cost thousands of dollars for every minute that the center is offline. Even though the

From smart home applications to vast online commercial empires, today's leading industries are increasingly dependent on extensive data networks. Unlike in previous years, when data was generated and stored using

It isn’t talked about much, but tape is more important in today’s world of exploding data collection and storage than it was decades ago. Enterprise tape is now able to

Given the importance that immediate access to information carries in today’s business world, being able to rely upon your data center for round-the-clock reliability is vital. Unfortunately, completely uninterrupted support

Over the past few decades, virtually every single aspect of the data center has changed. This massive shift in architecture is largely fueled by the applications that run these centers.

You need to keep your office running 24/7, and that means critical infrastructure has to remain online and accessible. All the same, everything ages, and as components wear down, you are

If you own or are on the senior management staff of a business that uses a data center, mark your calendar for March 14 through March 18. Mark Evanko,

When your plan A fails, your UPS device is your emergency backup. Reliability and efficiency are vital. As data center technology has rapidly evolved, many companies have relied on essentially

The storage industry is rapidly evolving at every level. It is not just the technology itself which is undergoing constant transformation, but the very ways in which it is distributed,

A data center is an important part of any technologically oriented business. Finding the right amount of storage space (not too much or too little) and monitoring power usage effectiveness

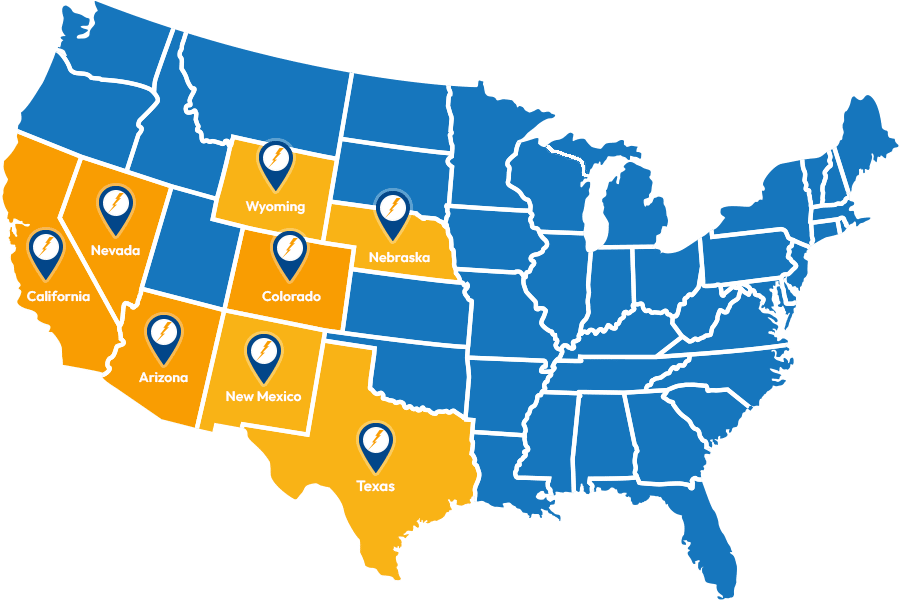

Service Locations

Expanded Service Area

Useful Links

Contact Information